AIME G8000 - Multi GPU HPC Rack Server

The AIME G8000 is an enterprise deep learning server based on the Gigabyte G482-Z54 barebone. It can be configured with up to eight of the most advanced deep learning accelerators and GPUs, entering the peta-FLOPS HPC league with more than 8 Peta TensorOps of deep learning performance. The G8000 is the ultimate multi-GPU server: dual EPYC CPUs, the fastest PCIe 4.0 bus speeds, up to 100 GbE network connectivity and up to 2 TB of main memory.

Built to perform 24/7 in your in-house data center or co-location facility for the most reliable high-performance computing.

This product is currently unavailable.

AIME G8000 - Deep Learning Server

If you are looking for a server built for maximum deep learning training and inference performance and for the highest demands of HPC, the AIME G8000 multi-GPU 4U rack server delivers.

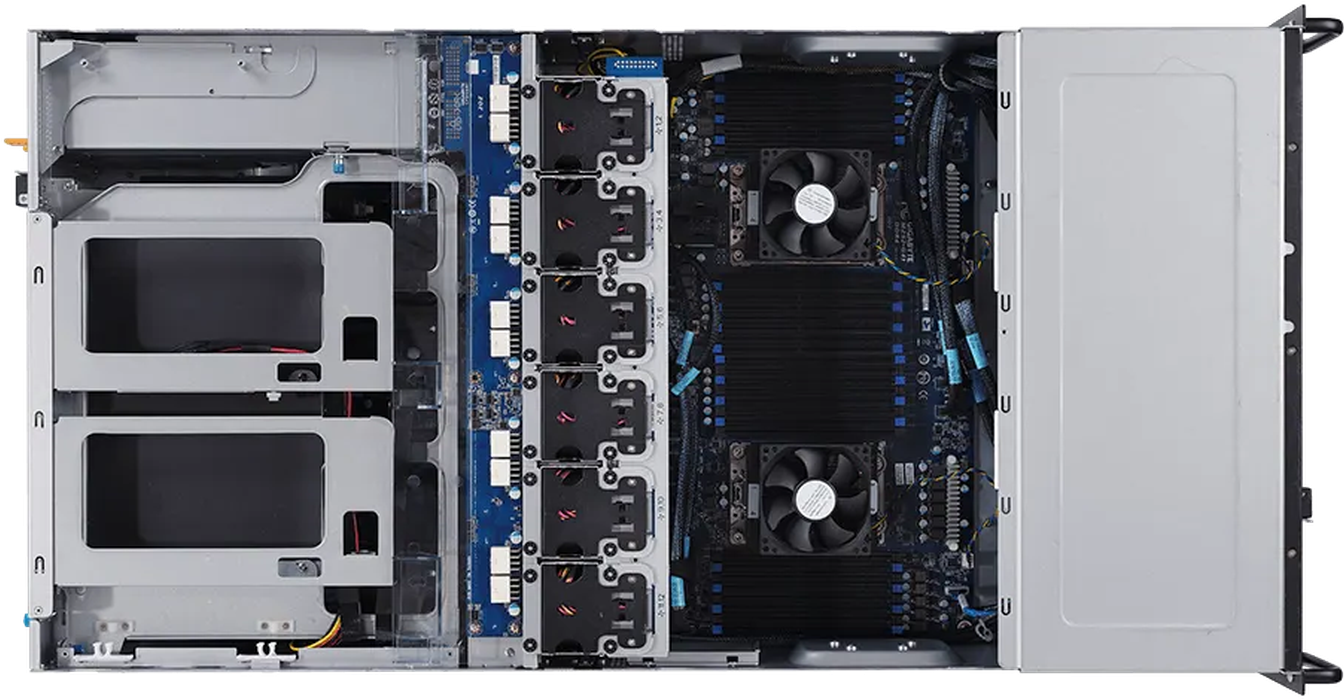

The AIME G8000 is based on the new Gigabyte G482-Z54 barebone, powered by two AMD EPYC™ Milan processors with up to 64 cores each, for a total of up to 128 cores and 256 CPU threads running in parallel.
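As a quick sanity check of that thread count, here is a minimal Python sketch. It assumes SMT (two hardware threads per core) is enabled in the BIOS, and the helper name `expected_threads` is hypothetical. It compares the expected figure with what the operating system reports through the standard `os.cpu_count()`:

```python
import os

# Assumption: SMT is enabled in the BIOS, so each core exposes 2 hardware threads.
THREADS_PER_CORE = 2


def expected_threads(sockets: int, cores_per_cpu: int,
                     threads_per_core: int = THREADS_PER_CORE) -> int:
    """Number of hardware threads the OS should report for a CPU configuration."""
    return sockets * cores_per_cpu * threads_per_core


if __name__ == "__main__":
    # Largest option from the technical details: 2x EPYC 7713 with 64 cores each.
    expected = expected_threads(sockets=2, cores_per_cpu=64)
    print(f"expected threads: {expected}, reported by the OS: {os.cpu_count()}")
```

With 2x EPYC 7713 this gives 2 x 64 x 2 = 256 threads; the smaller CPU options scale down accordingly (2x EPYC 7313, for example, gives 64).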

Its GPU-optimized design with high-airflow cooling allows the use of eight high-end double-slot GPUs such as the NVIDIA A100, RTX 3090 Turbo, RTX A6000, Tesla or Quadro models.

Definable GPU Configuration

Choose the desired configuration from the most powerful NVIDIA GPUs for deep learning:

Up to 8x NVIDIA A100

The NVIDIA A100 is the flagship of NVIDIA's Ampere processor generation. With its 6912 CUDA cores, 432 third-generation Tensor Cores and 80 GB of highest-bandwidth HBM2e memory, a single A100 breaks the peta-TOPS performance barrier, and four of them add up to more than 1000 teraFLOPS of TF32 Tensor Core performance (peak figures with structured sparsity).
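These peta-scale numbers are Tensor Core peak figures, not classic FP32 throughput. The sketch below tallies them using the per-GPU peak values from NVIDIA's published A100 datasheet (theoretical maxima, with 2:4 structured sparsity for the Tensor Core rows). It assumes perfect linear scaling across GPUs, which real workloads do not reach:

```python
# Per-GPU peak figures from NVIDIA's A100 datasheet (theoretical maxima).
A100_PEAK = {
    "FP32 (CUDA cores), TFLOPS": 19.5,
    "TF32 Tensor Core, sparse, TFLOPS": 312.0,
    "INT8 Tensor Core, sparse, TOPS": 1248.0,
}


def aggregate(per_gpu: float, gpus: int) -> float:
    """Peak throughput of several GPUs, assuming perfect linear scaling."""
    return per_gpu * gpus


for metric, value in A100_PEAK.items():
    print(f"{metric}: 1 GPU = {value:g}, 4 GPUs = {aggregate(value, 4):g}, "
          f"8 GPUs = {aggregate(value, 8):g}")
```

Eight A100s at 1248 sparse INT8 TOPS each come to roughly 10,000 TOPS, which is consistent with the "more than 8 Peta TensorOps" figure, while plain FP32 stays far below the 1000 teraFLOPS mark.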

Up to 8x NVIDIA RTX 3090 Turbo

The GeForce RTX™ 3090 is a big ferocious GPU (BFGPU) with TITAN-class performance. It is powered by Ampere, NVIDIA's 2nd-generation RTX architecture, doubling down on AI performance with 10496 CUDA cores, 328 third-generation Tensor Cores and new streaming multiprocessors. It features 24 GB of GDDR6X memory.

Up to 8x Tesla V100S

The Tesla V100S is the flagship of the Volta GPU series and can take on any Turing-based GPU. With its 640 Tensor Cores and 32 GB of highest-bandwidth HBM2 memory, the Tesla V100 was the first available GPU to go beyond 100 teraFLOPS (TFLOPS).

Up to 8x NVIDIA RTX A6000

The NVIDIA RTX A6000 is the Ampere-based refresh of the Quadro RTX 6000. It uses the same GPU processor (GA102) as the RTX 3090, but with all processor cores enabled, so it even outperforms the RTX 3090 with its 10752 CUDA cores and 336 third-generation Tensor Cores. It also doubles the GPU memory of both the Quadro RTX 6000 and the RTX 3090, to 48 GB of GDDR6 ECC memory. The NVIDIA RTX A6000 is currently the second-fastest available NVIDIA GPU, topped only by the NVIDIA A100, and with one of the largest available GPU memories it is well suited to the most compute- and memory-demanding tasks.

All NVIDIA GPUs are supported by NVIDIA's CUDA-X AI SDK, including cuDNN and TensorRT, which power nearly all popular deep learning frameworks.

Dual EPYC CPU Performance

The high-end AMD EPYC server CPU delivers up to 64 cores and 128 threads per CPU at an unbeaten price-performance ratio.

Each AMD EPYC CPU provides 128 PCIe 4.0 lanes, which allow the highest interconnect and data transfer rates between the CPUs and the GPUs and ensure that all GPUs are connected with full x16 PCIe 4.0 bandwidth.
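The lane and bandwidth arithmetic behind full x16 connectivity can be sketched as follows. The figures are theoretical: PCIe 4.0 signals at 16 GT/s per lane with 128b/130b encoding, and the sketch ignores packet and protocol overhead, so measured transfer rates will be lower. `link_bandwidth_gb_s` is an illustrative helper, not part of any library:

```python
# PCIe 4.0 signalling: 16 GT/s per lane, 128b/130b line encoding.
PCIE4_TRANSFERS_PER_S = 16e9
ENCODING_EFFICIENCY = 128 / 130


def link_bandwidth_gb_s(lanes: int) -> float:
    """Theoretical one-direction PCIe 4.0 bandwidth in GB/s.

    Ignores packet and protocol overhead, so real transfers are lower.
    """
    bits_per_s = PCIE4_TRANSFERS_PER_S * ENCODING_EFFICIENCY * lanes
    return bits_per_s / 8 / 1e9


GPUS = 8
LANES_PER_GPU = 16
lanes_needed = GPUS * LANES_PER_GPU
print(f"lanes for {GPUS} GPUs at x{LANES_PER_GPU}: {lanes_needed}")
print(f"x16 link: {link_bandwidth_gb_s(LANES_PER_GPU):.1f} GB/s per direction")
```

Eight x16 GPU links need 128 lanes, and each link offers roughly 31.5 GB/s per direction before protocol overhead.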

A large number of CPU cores can improve performance dramatically when the CPU is used to preprocess data and deliver it fast enough to keep the GPUs optimally fed with work.
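A minimal sketch of spreading a CPU-bound preprocessing step across all cores, using Python's standard `concurrent.futures.ProcessPoolExecutor`. The min-max normalization in the hypothetical `preprocess` function stands in for real work such as image decoding or augmentation; deep learning frameworks offer their own equivalents, for example the `num_workers` argument of PyTorch's `DataLoader`.

```python
import os
from concurrent.futures import ProcessPoolExecutor


def preprocess(sample: list[float]) -> list[float]:
    """Hypothetical CPU-bound preprocessing step: min-max normalize one sample."""
    lo, hi = min(sample), max(sample)
    span = (hi - lo) or 1.0  # avoid division by zero for constant samples
    return [(x - lo) / span for x in sample]


def preprocess_parallel(samples, workers=None):
    """Spread preprocessing over CPU cores; results keep the input order."""
    workers = workers or os.cpu_count()
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(preprocess, samples, chunksize=64))


if __name__ == "__main__":
    data = [[float(i), float(i + 1), float(i + 3)] for i in range(10_000)]
    batch = preprocess_parallel(data)
    print(len(batch), batch[0])
```

Processes rather than threads are used because CPython's global interpreter lock keeps threads from executing Python bytecode in parallel; `chunksize` groups samples per task to reduce inter-process overhead.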

Up to 16 TB High-Speed SSD Storage

Deep learning usually involves large amounts of data that must be processed and stored. High throughput and fast access times are essential for fast turnaround times.

The AIME G8000 can be configured with up to two exchangeable U.2 NVMe triple-level cell (TLC) SSDs with a capacity of up to 8 TB each, adding up to a total of 16 TB of the fastest SSD storage.

Since each SSD is connected directly to the CPU and main memory via PCIe 4.0 lanes, the drives achieve consistently high read and write rates of 3000 MB/s.
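To put 3000 MB/s into perspective, this sketch estimates how long one full sequential pass over a dataset takes on a single drive. It uses decimal units (1 TB = 1,000,000 MB), as SSD vendors do, and assumes the rated rate is sustained for the whole read; `read_time_minutes` is an illustrative helper:

```python
def read_time_minutes(dataset_tb: float, throughput_mb_s: float = 3000.0) -> float:
    """Minutes needed to stream a dataset once at a sustained sequential rate.

    Uses decimal units (1 TB = 10**6 MB), as SSD vendors do.
    """
    seconds = dataset_tb * 1e6 / throughput_mb_s
    return seconds / 60


for size_tb in (1, 4, 8):
    print(f"{size_tb} TB at 3000 MB/s: {read_time_minutes(size_tb):.0f} min")
```

A full 8 TB drive is read end to end in about 44 minutes. Spreading a dataset evenly across both drives and reading them concurrently would roughly halve the time for the same amount of data, assuming the rest of the pipeline keeps up.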

As is usual in the server sector, the SSDs have an MTBF of 2,000,000 hours and a 5-year manufacturer's warranty.
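An MTBF figure is easier to interpret as an annualized failure rate (AFR). The sketch below uses the common constant-failure-rate (exponential) model and assumes 24/7 operation. The result describes the expected fraction of failures across a large population of drives, not the lifetime of an individual SSD:

```python
import math

HOURS_PER_YEAR = 8760  # 365 days x 24 h, i.e. 24/7 operation


def annualized_failure_rate(mtbf_hours: float) -> float:
    """AFR under the usual constant-failure-rate (exponential) model."""
    return 1 - math.exp(-HOURS_PER_YEAR / mtbf_hours)


afr = annualized_failure_rate(2_000_000)
print(f"AFR per SSD: {afr:.3%}")
```

For an MTBF of 2,000,000 hours this gives an AFR of roughly 0.44% per drive.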

High Connectivity and Management Interface

In addition to the standard 2x 1 Gbit/s and 1x 10 Gbit/s SFP+ LAN ports, the G8000 can be fitted with up to 2x 100 Gbit/s (GbE) network adapters for the fastest interconnect to NAS resources and big data collections. The highest available LAN connectivity is also a must for data exchange in a distributed computing setup.
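The gain from faster links is easiest to see as transfer time. This sketch computes the theoretical time to move a dataset over 1, 10 and 100 Gbit/s links. The `efficiency` parameter is an illustrative knob for protocol overhead; real throughput also depends on local storage and the remote NAS:

```python
def transfer_seconds(size_gb: float, link_gbit_s: float, efficiency: float = 1.0) -> float:
    """Seconds to move size_gb gigabytes over a link of link_gbit_s Gbit/s.

    efficiency < 1.0 models protocol overhead; 1.0 is the theoretical line rate.
    """
    return size_gb * 8 / (link_gbit_s * efficiency)


for link in (1, 10, 100):
    print(f"1 TB over {link:>3} Gbit/s: {transfer_seconds(1000, link) / 60:.1f} min")
```

At line rate, 1 TB takes about 133 minutes over 1 Gbit/s, about 13 minutes over 10 Gbit/s and about 80 seconds over 100 Gbit/s.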

The AIME G8000 is fully remotely manageable through IPMI/BMC (AST2500), which makes it easy to integrate into larger server clusters.
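For scripted cluster management, the BMC can be queried with the open-source `ipmitool` CLI. Below is a minimal Python sketch. It assumes `ipmitool` is installed on the management host, the BMC is reachable over the network, and the password is exported as `IPMI_PASSWORD`, which ipmitool reads when given its `-E` flag. The helper names and the BMC address are hypothetical:

```python
import os
import subprocess


def ipmi_command(bmc_host: str, user: str, *args: str) -> list[str]:
    """Build an ipmitool call against the BMC over IPMI v2.0 (lanplus).

    -E makes ipmitool read the password from the IPMI_PASSWORD environment
    variable, so it does not appear on the command line.
    """
    return ["ipmitool", "-I", "lanplus", "-H", bmc_host, "-U", user, "-E", *args]


def run_ipmi(bmc_host: str, user: str, *args: str) -> str:
    """Run ipmitool and return its stdout (needs ipmitool and IPMI_PASSWORD)."""
    if "IPMI_PASSWORD" not in os.environ:
        raise RuntimeError("set IPMI_PASSWORD before calling run_ipmi")
    result = subprocess.run(ipmi_command(bmc_host, user, *args),
                            capture_output=True, text=True, check=True)
    return result.stdout


if __name__ == "__main__":
    # Hypothetical BMC address (documentation range) and user name.
    print(" ".join(ipmi_command("192.0.2.10", "admin", "chassis", "power", "status")))
```

Other ipmitool subcommands, such as `sdr` for sensor readings, can be passed the same way, e.g. `run_ipmi(host, user, "sdr")`.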

Optimized for Multi GPU Server Applications

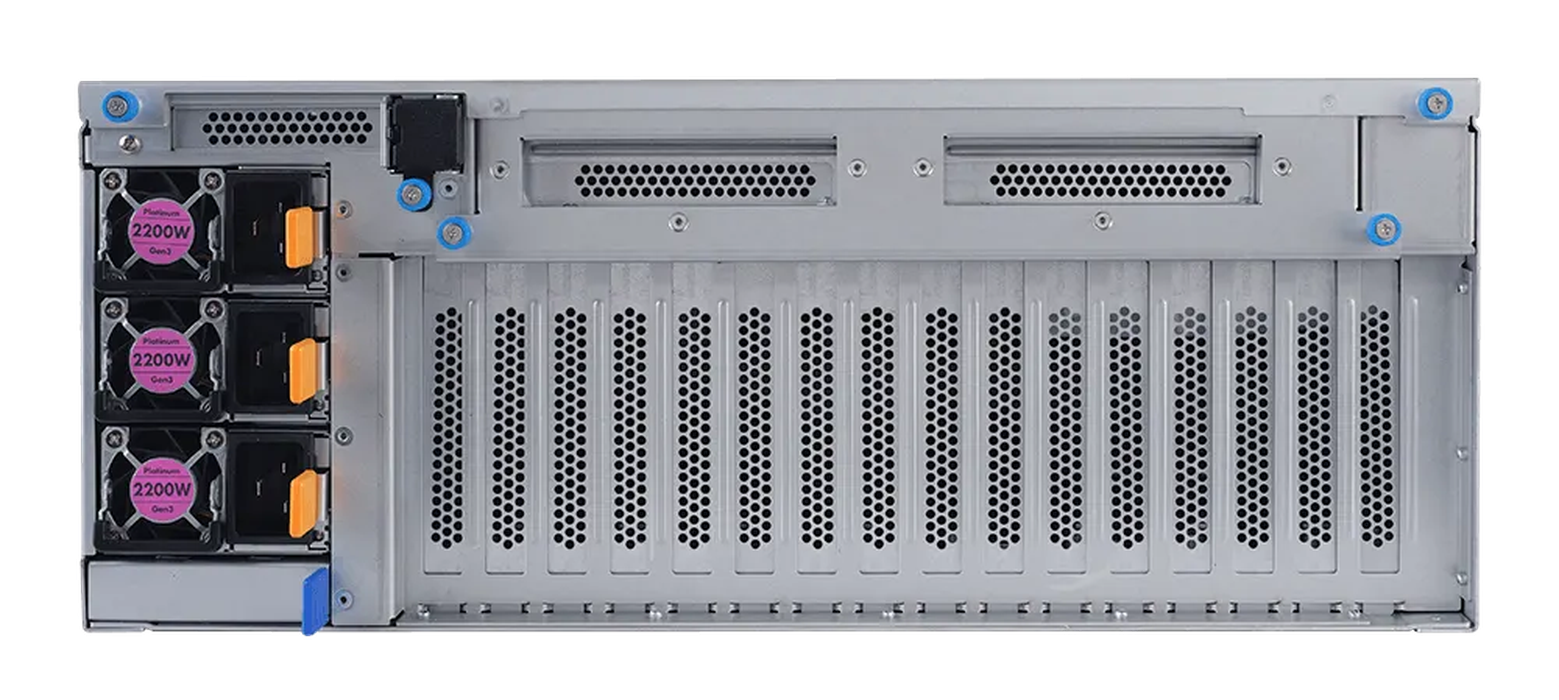

The AIME G8000 is energy efficient thanks to its redundant 80 PLUS Platinum power supplies, which enable long-term fail-safe operation.
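A rough power-budget estimate puts the 3x 2200 W power supplies from the technical details into context. This sketch assumes 2+1 redundancy (two supplies carry the load while one is spare), which is an assumption not stated above. It uses the vendors' nominal board power for the GPU options and the 225 W TDP of the EPYC 7713, and adds a hypothetical 500 W allowance for fans, memory, storage and NICs:

```python
# Nominal board power / TDP from the vendors' public spec sheets (watts).
GPU_POWER_W = {
    "A100 80GB PCIe": 300,
    "RTX 3090": 350,
    "RTX A6000": 300,
    "Tesla V100S": 250,
}
CPU_TDP_W = 225        # AMD EPYC 7713
PSU_W = 2200           # per power supply
ACTIVE_PSUS = 2        # assumption: 2+1 redundancy (one of three PSUs is spare)
OTHER_W = 500          # hypothetical allowance for fans, RAM, SSDs and NICs
PSU_EFFICIENCY = 0.94  # 80 PLUS Platinum figure from the technical details


def peak_load_w(gpu: str, gpus: int = 8, cpus: int = 2) -> int:
    """Rough worst-case DC load with all GPUs and CPUs at their rated power."""
    return gpus * GPU_POWER_W[gpu] + cpus * CPU_TDP_W + OTHER_W


budget = PSU_W * ACTIVE_PSUS
for gpu in GPU_POWER_W:
    load = peak_load_w(gpu)
    wall = load / PSU_EFFICIENCY
    print(f"8x {gpu}: ~{load} W DC (~{wall:.0f} W at the wall), "
          f"budget with one PSU spare: {budget} W")
```

Under these assumptions, even eight RTX 3090s at full load stay below the 4400 W that two active supplies deliver, so the system could keep running if one supply fails.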

Its thermal control technology makes power consumption more efficient in large-scale environments.

Everything is set up, configured and tuned by AIME for optimal multi-GPU performance.

The G8000 comes with a preinstalled Linux OS configured with the latest drivers and frameworks such as TensorFlow, Keras, PyTorch and MXNet. It is ready right after the first boot to accelerate your deep learning applications.
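After the first boot, a quick way to confirm that the driver sees all installed GPUs is to query `nvidia-smi`. This sketch uses its CSV query mode (`--query-gpu` with `--format=csv,noheader,nounits`) and parses the result; the helper names are illustrative:

```python
import subprocess

QUERY = ["nvidia-smi", "--query-gpu=index,name,memory.total",
         "--format=csv,noheader,nounits"]


def parse_gpu_list(csv_text: str) -> list[tuple[int, str, int]]:
    """Parse nvidia-smi CSV lines of the form 'index, name, memory_MiB'."""
    gpus = []
    for line in csv_text.strip().splitlines():
        index, name, mem_mib = (field.strip() for field in line.split(","))
        gpus.append((int(index), name, int(mem_mib)))
    return gpus


def list_gpus() -> list[tuple[int, str, int]]:
    """Query the installed GPUs through the NVIDIA driver's nvidia-smi tool."""
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout
    return parse_gpu_list(out)
```

On a fully populated G8000, `list_gpus()` should return eight entries; inside PyTorch, `torch.cuda.device_count()` reports the same count.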

Technical Details

| Component | Specification |
|---|---|
| Type | Rack server 4U, 90 cm depth |
| CPU (configurable) | EPYC Milan: 2x EPYC 7313 (16 cores, 3.0 / 3.7 GHz), 2x EPYC 7443 (24 cores, 2.85 / 4.0 GHz), 2x EPYC 7543 (32 cores, 2.8 / 3.7 GHz) or 2x EPYC 7713 (64 cores, 2.0 / 3.6 GHz) |
| RAM | 128 / 256 / 512 / 1024 / 2048 GB ECC memory |
| GPU options | 2 to 8x NVIDIA A100 80GB, 2 to 8x NVIDIA RTX 3090 24GB, 2 to 8x NVIDIA RTX A5000 24GB, 2 to 8x NVIDIA RTX A6000 48GB or 2 to 8x Tesla V100S 32GB |
| Cooling | CPUs and GPUs are cooled by an air stream from 12 high-performance fans (21,000 RPM, > 100,000 h MTBF) |
| Storage | Up to 2x 8 TB U.2 NVMe SSD, triple-level cell (TLC) quality; 3000 MB/s read, 3000 MB/s write; MTBF of 2,000,000 hours and 5-year manufacturer's warranty |
| Network | 2x 1 Gbit/s LAN (RJ45), 1x IPMI LAN; optional: 2x 10 Gbit/s LAN (RJ45), 2x 10 Gbit/s LAN (SFP+), 2x 100 GbE (QSFP28) |
| USB | 2x USB 3.0 ports (front) |
| PSU | 3x 2200 W redundant power supplies, 80 PLUS Platinum certified (94% efficiency) |
| Noise level | 88 dBA |
| Dimensions (W x H x D) | 448 mm x 176 mm x 880 mm (4U); 17.6" x 6.92" x 34.65" |
| Operating environment | Operating temperature: 10 ℃ to 35 ℃; non-operating temperature: -40 ℃ to 70 ℃ |