Overview of the benchmarked GPUs

Although we only tested a small selection of all available GPUs, we think we covered the GPUs that are currently best suited for deep learning training, model fine-tuning and inference tasks, due to their compute and memory capabilities and their compatibility with current deep learning frameworks, namely PyTorch and TensorFlow.

For reference, the iconic deep learning GPUs RTX 2080 Ti and Tesla V100 are also included to show the increase in compute performance over recent years.

Tesla V100

Suitable for: Servers

Launch Date: 2017.05

Architecture: Volta

VRAM Memory (GB): 16 (HBM2)

Cuda Cores: 5120

Tensor Cores: 640

Power Consumption (Watt): 250

Memory Bandwidth (GB/s): 900

GeForce RTX 2080 Ti

Suitable for: Workstations

Launch Date: 2018.09

Architecture: Turing

VRAM Memory (GB): 11 (GDDR6)

Cuda Cores: 4352

Tensor Cores: 544

Power Consumption (Watt): 260

Memory Bandwidth (GB/s): 616

GeForce RTX 3090

Suitable for: Workstations

Launch Date: 2020.09

Architecture: Ampere

VRAM Memory (GB): 24 (GDDR6X)

Cuda Cores: 10496

Tensor Cores: 328

Power Consumption (Watt): 350

Memory Bandwidth (GB/s): 936

RTX A5000

Suitable for: Workstations/Servers

Launch Date: 2021.04

Architecture: Ampere

VRAM Memory (GB): 24 (GDDR6)

Cuda Cores: 8192

Tensor Cores: 256

Power Consumption (Watt): 230

Memory Bandwidth (GB/s): 768

RTX A6000

Suitable for: Workstations/Servers

Launch Date: 2020.10

Architecture: Ampere

VRAM Memory (GB): 48 (GDDR6)

Cuda Cores: 10752

Tensor Cores: 336

Power Consumption (Watt): 300

Memory Bandwidth (GB/s): 768

AMD Instinct MI100

Suitable for: Servers

Launch Date: 2020.11

Architecture: CDNA (1)

VRAM Memory (GB): 32 (HBM2)

Stream Processors: 7680

Power Consumption (Watt): 300

Memory Bandwidth (TB/s): 1.2

GeForce RTX 4060 Ti

Suitable for: Workstations

Launch Date: 2023.07

Architecture: Ada Lovelace

VRAM Memory (GB): 16 (GDDR6)

Cuda Cores: 4352

Tensor Cores: 136

Power Consumption (Watt): 165

Memory Bandwidth (GB/s): 288

GeForce RTX 4090

Suitable for: Workstations

Launch Date: 2022.10

Architecture: Ada Lovelace

VRAM Memory (GB): 24 (GDDR6X)

Cuda Cores: 16384

Tensor Cores: 512

Power Consumption (Watt): 450

Memory Bandwidth (GB/s): 1008

RTX 4500 Ada

Suitable for: Servers

Launch Date: 2023.08

Architecture: Ada Lovelace

VRAM Memory (GB): 24 (GDDR6)

Cuda Cores: 7680

Tensor Cores: 240

Power Consumption (Watt): 210

Memory Bandwidth (GB/s): 432

RTX 5000 Ada

Suitable for: Servers

Launch Date: 2023.08

Architecture: Ada Lovelace

VRAM Memory (GB): 32 (GDDR6)

Cuda Cores: 12800

Tensor Cores: 400

Power Consumption (Watt): 250

Memory Bandwidth (GB/s): 576

RTX 6000 Ada

Suitable for: Workstations/Servers

Launch Date: 2022.09

Architecture: Ada Lovelace

VRAM Memory (GB): 48 (GDDR6)

Cuda Cores: 18176

Tensor Cores: 568

Power Consumption (Watt): 300

Memory Bandwidth (GB/s): 960

NVIDIA L40S

Suitable for: Servers

Launch Date: 2023.08

Architecture: Ada Lovelace

VRAM Memory (GB): 48 (GDDR6)

Cuda Cores: 18176

Tensor Cores: 568

Power Consumption (Watt): 350

Memory Bandwidth (GB/s): 864

GeForce RTX 5090

Suitable for: Workstations

Launch Date: 2025.02

Architecture: Blackwell

VRAM Memory (GB): 32 (GDDR7)

Cuda Cores: 21760

Tensor Cores: 680

Power Consumption (Watt): 575

Memory Bandwidth (GB/s): 1800

RTX PRO 6000 Blackwell Workstation Edition

Suitable for: Workstations

Launch Date: 2025.05

Architecture: Blackwell

VRAM Memory (GB): 96 (GDDR7)

Cuda Cores: 24064

Tensor Cores: 752

Power Consumption (Watt): 600

Memory Bandwidth (GB/s): 1792

A100

Suitable for: Servers

Launch Date: 2020.05

Architecture: Ampere

VRAM Memory (GB): 40 (HBM2) / 80 (HBM2e)

Cuda Cores: 6912

Tensor Cores: 432

Power Consumption (Watt): 300

Memory Bandwidth (GB/s): 1935 (80 GB PCIe)

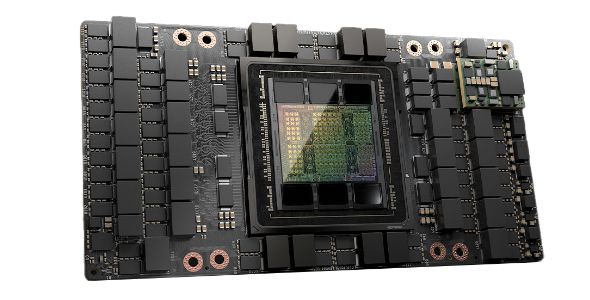

H100 80GB

Suitable for: Servers

Launch Date: 2022.10

Architecture: Hopper

VRAM Memory (GB): 80 (HBM2e)

Cuda Cores: 14592

Tensor Cores: 456

Power Consumption (Watt): 350

Memory Bandwidth (GB/s): 2000

H100 NVL

Suitable for: Servers

Launch Date: 2023.07

Architecture: Hopper

VRAM Memory (GB): 94 (HBM3)

Cuda Cores: 14592

Tensor Cores: 456

Power Consumption (Watt): 400

Memory Bandwidth (GB/s): 3900

H200 NVL

Suitable for: Servers

Launch Date: 2024.11

Architecture: Hopper

VRAM Memory (GB): 141 (HBM3e)

Cuda Cores: 16896

Tensor Cores: 528

Power Consumption (Watt): 600

Memory Bandwidth (GB/s): 4800

H200 SXM

Suitable for: HGX Servers

Launch Date: 2024.11

Architecture: Hopper

VRAM Memory (GB): 141 (HBM3e)

Cuda Cores: 16896

Tensor Cores: 528

Power Consumption (Watt): 700

Memory Bandwidth (GB/s): 4800

The Deep Learning Benchmark

To benchmark the GPUs, we measure the training performance of the language model BERT Large in trained sequences per second and of the visual recognition model ResNet-50 (version 1.5) in trained images per second.

More about these two classic deep learning networks:

BERT Model

We used the variant "BERT large cased" for our benchmarks. BERT large is a transformer model consisting of 24 layers, 1024 hidden dimensions, 16 attention heads, and a total of 335 million parameters. Cased means that this BERT version differentiates between upper- and lowercase characters in its input.

BERT stands for Bidirectional Encoder Representations from Transformers and is a deep learning model for natural language processing, developed by Google in 2018. It uses the Transformer architecture and is trained on a large amount of unlabeled text to learn the contextual relationships of natural language: random words in a text are blanked out (masked), and the model has to predict the words that fit the gaps. Through this training, BERT can identify context-specific meanings of words and distinguish ambiguous expressions. The special feature of BERT is its bidirectional modeling. Unlike earlier language models that only considered the preceding words as context, BERT models the context bidirectionally. This means that BERT uses both the preceding and the following words in the text to interpret the meaning of a particular word.
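The masking objective can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions: tokens are whitespace-split words and the helper name `mask_tokens` is hypothetical. The original BERT recipe selects about 15% of the tokens and replaces only 80% of those with [MASK] (10% become a random token, 10% stay unchanged); the sketch omits that refinement and masks every selected token.

```python
import random

MASK = "[MASK]"


def mask_tokens(tokens, mask_prob=0.15, seed=None):
    """Hide a random subset of tokens behind [MASK].

    Returns (masked_tokens, targets), where targets maps each masked
    position to the original token the model has to predict.
    Simplified sketch: every selected token is replaced by [MASK].
    """
    rng = random.Random(seed)
    masked = list(tokens)
    targets = {}
    for i, tok in enumerate(tokens):
        if rng.random() < mask_prob:
            targets[i] = tok      # the training label at this position
            masked[i] = MASK      # what the model actually sees
    return masked, targets


tokens = "the bank raised its interest rate again".split()
masked, targets = mask_tokens(tokens, mask_prob=0.3, seed=1)
print(masked)
print(targets)
```

During training, the model only receives `masked` and is scored on how well it predicts the words in `targets`, which forces it to use the context on both sides of each gap.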

ResNet-50 Model

The ResNet-50 model version 1.5 used for our benchmarks counts 50 layers with weights: an initial convolution, 48 convolutional layers in 16 bottleneck blocks and a final fully connected layer (1+48+1=50), complemented by a max-pool and an average-pool layer, with a total of about 25 million parameters. Because it is used in so many benchmarks, highly optimized implementations are available that draw maximum performance from the GPU and reveal the actual compute limits of the hardware.

A Residual Neural Network, or ResNet, was first introduced in 2015 for image classification. ResNet is considered one of the first truly deep neural networks. It addressed the problem of vanishing/exploding gradients that occurred in earlier plain network architectures when more intermediate layers were added (see Deep Residual Learning for Image Recognition). The characteristic feature of residual networks is the use of "skip connections" between layers, which allow individual layers to be bypassed. This makes it possible to train much deeper networks.
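A one-dimensional toy calculation gives an intuition for why skip connections help. It assumes each layer is a scalar function with the same small local derivative (a hypothetical 0.01 for all 50 layers). For a plain network, the chain rule multiplies these derivatives; a residual layer y = f(x) + x has the derivative f'(x) + 1, so the identity path keeps the product from collapsing. Real networks multiply Jacobian matrices rather than scalars, so this is only an illustration, and `backprop_gain` is a hypothetical helper.

```python
def backprop_gain(layer_derivatives, residual):
    """Product of per-layer derivatives along the chain rule (scalar toy model).

    Plain layer    y = f(x):      dy/dx = f'(x)
    Residual layer y = f(x) + x:  dy/dx = f'(x) + 1  (the skip connection adds identity)
    """
    gain = 1.0
    for d in layer_derivatives:
        gain *= (d + 1.0) if residual else d
    return gain


derivs = [0.01] * 50  # hypothetical small local derivative in each of 50 layers
plain = backprop_gain(derivs, residual=False)
res = backprop_gain(derivs, residual=True)
print(f"plain network gradient factor:    {plain:.3e}")
print(f"residual network gradient factor: {res:.3f}")
```

In the plain chain the gradient reaching the first layer shrinks to about 1e-100, while the residual chain keeps it in the order of 1, which is what allows the early layers of a 50-layer network to keep learning.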

We use a PyTorch implementation to benchmark the training performance on these networks. PyTorch has evolved into the most popular deep learning framework and is the de facto standard in research, with huge support in the open source community.

With the compile mode introduced in PyTorch 2.0, PyTorch closed its performance gap to other frameworks and also became the framework that can achieve the best training and inference performance on GPUs.

Compile Mode

The release of PyTorch 2.0 in March 2023 introduced a number of significant changes to improve performance and to support dynamic shapes and distributed training. One major performance feature of PyTorch 2 is torch.compile, introduced as the main API of PyTorch 2. It wraps your model and returns a version of it that is compiled for the specific GPU and optimized for its available instruction set. This gives much better performance by using the specific features of the GPU architecture. The feature is fully additive and optional, which keeps PyTorch 2 backward compatible. In most cases, enabling it only requires adding one line of code:

model = torch.compile(model)

In this benchmark, the training speed was measured with fp32 and with fp16 AMP (automatic mixed precision). These precisions are still recommended for training models of small and moderate size (below 10B parameters).

Numerical Precision: fp32/AMP

The numerical precision used for computing the weights and associated values in deep learning models plays a significant role in the training process. Higher precision enables finer weight adjustments, but it also requires more memory and slows down computation.

In our benchmarks, we examine the performance of "fp32" data types and calculations that utilize the "Automatic Mixed Precision" technique.

The "fp32" (floating point 32-bit) data type is the most widely used standard in deep learning. It uses a 32-bit encoding, consisting of 1 bit for the sign, 8 bits for the exponent, and 23 bits for the mantissa.
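To make the bit layout concrete, the sketch below uses Python's standard `struct` module to split a number stored as fp32 into its sign, exponent and mantissa fields. The helper names are hypothetical, and the reconstruction formula only applies to normal numbers (not to zero, subnormals, infinities or NaN).

```python
import struct


def fp32_fields(x: float):
    """Return the (sign, exponent, mantissa) bit fields of x stored as float32."""
    # Pack as big-endian float32, then reinterpret the same 4 bytes as an unsigned int
    (bits,) = struct.unpack(">I", struct.pack(">f", x))
    sign = bits >> 31                # 1 bit
    exponent = (bits >> 23) & 0xFF   # 8 bits, stored with a bias of 127
    mantissa = bits & 0x7FFFFF       # 23 bits, implicit leading 1 for normal numbers
    return sign, exponent, mantissa


def fp32_from_fields(sign: int, exponent: int, mantissa: int) -> float:
    """Rebuild a normal (non-zero, finite) float32 value from its bit fields."""
    return (-1) ** sign * (1 + mantissa / 2**23) * 2.0 ** (exponent - 127)


print(fp32_fields(1.0))    # (0, 127, 0)
print(fp32_fields(-2.0))   # (1, 128, 0)
print(fp32_fields(1.5))    # (0, 127, 4194304)
```

Values such as 0.1 cannot be stored exactly in 23 mantissa bits, so `fp32_from_fields(*fp32_fields(0.1))` returns the nearest float32 value, which differs slightly from 0.1.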

Automatic Mixed Precision (AMP) is a technique that is becoming increasingly popular in the deep learning community. It combines different numerical precisions during training, typically a lower precision such as fp16, bf16 or fp8 for most operations and fp32 where precision matters, to improve training efficiency while preserving model accuracy. The idea behind AMP is that some parts of the model are more sensitive to numerical precision than others. By using higher precision where necessary and lower precision where it matters less, calculations can be made faster and more efficient overall without compromising the model's accuracy. However, implementing Automatic Mixed Precision can be complex and often requires special hardware support, such as Tensor Cores, to pay off.
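One reason mixed precision needs care is the narrow range of fp16, whose smallest positive value is about 6e-8. The sketch below assumes IEEE 754 half precision as provided by the `e` format of Python's `struct` module. It shows a small gradient value underflowing to zero in fp16 and how multiplying it by a scale factor before the conversion preserves it; this is the idea behind loss scaling, which PyTorch implements in `torch.amp.GradScaler`. The gradient value and the scale factor of 2**16 are illustrative assumptions, and `to_fp16` is a hypothetical helper.

```python
import struct


def to_fp16(x: float) -> float:
    """Round x to IEEE 754 half precision (fp16) and convert it back to a Python float."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


grad = 1e-8          # illustrative small gradient value
scale = 2.0 ** 16    # illustrative loss-scale factor

unscaled = to_fp16(grad)                  # underflows: too small for fp16
scaled = to_fp16(grad * scale) / scale    # scale up, store in fp16, unscale in higher precision

print(unscaled)   # 0.0
print(scaled)     # close to 1e-8
```

The scale factor has to stay small enough that scaled values do not exceed the fp16 maximum of 65504, which is why PyTorch's scaler adjusts it dynamically during training.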

The GPUs were compared using synthetic random image and text data to minimize the influence of external factors such as the type of dataset storage (SSD or network), the data loader and the data format.

The Python PyTorch scripts used for the benchmark are available on GitHub here.

The Testing Environment

As AIME offers server and workstation solutions for deep learning tasks, we used our AIME A4004 server and our AIME G500 workstation for the benchmark.

The AIME A4004 server and the AIME G500 workstation are designed to run multiple high-performance GPUs: they provide the sophisticated power delivery and cooling needed to reach and sustain maximum performance, and they can run each GPU in a PCIe 5.0 x16 slot directly connected to the CPU.

The technical specs to reproduce our benchmarks are:

a) For server-compatible GPUs:

AIME A4004 rack server, AMD EPYC 7553 (32 cores), 256 GB DDR5 ECC memory

b) For GPUs only available for workstations:

AIME G500 workstation, AMD Threadripper Pro 7975WX (32 cores), 256 GB DDR5 ECC memory

Using the AIME Machine Learning Container (MLC) management framework with the following setup:

- Ubuntu 22.04 LTS

- NVIDIA driver version 570.133.7

For NVIDIA cards of the Turing, Ampere, Ada and Hopper generations:

- CUDA 12.4

- CUDNN 9.1.0

- PyTorch 2.5.1 (official build)

For NVIDIA cards of the Blackwell generation:

- CUDA 12.8

- CUDNN 9.7.1

- PyTorch 2.7.0 (official build)

The AMD GPU in the benchmark, the AMD Instinct MI100, was tested with:

- ROCm 6.2

- MIOpen 2.19.0

- PyTorch 2.5.1 (official build)

Single GPU Performance

The BERT Large results are the average number of sequences per second trained while running for 50 steps at the specified batch size. Each result is the average of three runs, and the starting temperature of all GPUs was below 50 °C.

For the second benchmark, the ResNet-50 results are the average number of images per second trained while running for 50 steps at the specified batch size.
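The measurement described above, throughput averaged over 50 steps and several runs, can be sketched as a small timing helper. This is an illustration, not the benchmark script: `measure_throughput` and its `train_step` callable are hypothetical, and the untimed warm-up steps are an assumption of this sketch that keeps one-time costs such as torch.compile's compilation out of the timing. When timing CUDA work, `train_step` should call `torch.cuda.synchronize()` before returning, because GPU kernels run asynchronously.

```python
import time
from statistics import mean


def measure_throughput(train_step, batch_size, steps=50, runs=3, warmup=5):
    """Average samples/second of `train_step` over several timed runs.

    train_step: callable that performs one training step on one batch
    batch_size: number of samples (sequences or images) per step
    """
    per_run = []
    for _ in range(runs):
        for _ in range(warmup):          # untimed warm-up steps
            train_step()
        start = time.perf_counter()
        for _ in range(steps):
            train_step()
        elapsed = time.perf_counter() - start
        per_run.append(batch_size * steps / elapsed)
    return mean(per_run)


# Usage with a dummy step standing in for a real forward/backward pass
print(measure_throughput(lambda: sum(range(10_000)), batch_size=32, steps=10, runs=3))
```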

The two benchmarked use cases show quite similar GPU rankings. Only in the lower ranks does some reordering take place, as some GPUs benefit more from the more memory-bandwidth-constrained ResNet model than from the more compute-constrained BERT model. Among the high-end GPUs and accelerators, the ranking is clear.

The results also show that compile mode is mandatory to use the full performance of the GPUs and accelerators; especially on high-end accelerator cards, it gives a huge performance boost. The fp16 mixed precision (AMP) option doubles the performance on most GPUs and accelerators.

Multi GPU Deep Learning Training Performance

The next level of deep learning performance is to distribute the work and training load across multiple GPUs. Deep learning training scales very well across multiple GPUs, as they can compute in parallel most of the time and only have to exchange data after each backpropagation step to average the gradients.

The AIME A4004 and the AIME G500 support up to four server capable GPUs.

How does Multi-GPU Deep Learning Training work?

The method of choice for multi-GPU scaling is to spread the batch across the GPUs, so the effective (global) batch size is the sum of the local batch sizes of all GPUs in use. Each GPU computes the backpropagation for its slice of the batch. The gradients from all GPUs are then summed and averaged, and the model weights are updated accordingly, so that every GPU starts the next batch with the same weights.
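The batch-splitting scheme can be checked numerically with a toy model. The sketch below is plain Python with a hypothetical one-parameter linear model and a mean-squared-error loss standing in for a real network: each simulated GPU computes the gradient on its equally sized slice of the global batch, the local gradients are averaged (the role an all-reduce plays in frameworks such as PyTorch DistributedDataParallel), and the result matches the gradient of the full batch. Equal local batch sizes are assumed; with unequal slices, a plain average would no longer match.

```python
def mse_grad(w, xs, ys):
    """Gradient of mean((w*x - y)^2) with respect to the scalar weight w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def data_parallel_grad(w, xs, ys, num_gpus):
    """Split the global batch into equal local batches and average the local gradients."""
    if len(xs) % num_gpus:
        raise ValueError("global batch size must be divisible by num_gpus")
    local = len(xs) // num_gpus
    local_grads = [
        mse_grad(w, xs[i * local:(i + 1) * local], ys[i * local:(i + 1) * local])
        for i in range(num_gpus)
    ]
    return sum(local_grads) / num_gpus   # "all-reduce": average across GPUs


xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]   # global batch of 8 samples
ys = [3.0 * x for x in xs]                       # targets from y = 3x
w, lr = 0.5, 0.01

g_full = mse_grad(w, xs, ys)
g_dp = data_parallel_grad(w, xs, ys, num_gpus=4)  # 4 GPUs x local batch of 2
print(g_full, g_dp)

w = w - lr * g_dp   # every GPU applies the same averaged update
```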

As for the data exchange, there is a communication peak at the end of each batch, when the results are collected and the weights adjusted before the next batch can be calculated. While the GPUs are computing a batch, little or no communication happens between them.
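To get a feel for the size of that communication peak, the sketch below estimates how many bytes each GPU sends per training step. It assumes a ring all-reduce (one of the algorithms used by NVIDIA's NCCL library), in which each GPU sends about 2(n-1)/n times the gradient size, and fp32 gradients of 4 bytes per parameter; BERT Large's 335 million parameters are taken from the model description above. The 25 GB/s link bandwidth is a hypothetical round number, not a measured value, and the estimate ignores latency and any overlap of communication with computation. The helper names are hypothetical.

```python
def ring_allreduce_bytes_per_gpu(num_params, num_gpus, bytes_per_param=4):
    """Bytes each GPU sends in one ring all-reduce of the full gradient.

    A ring all-reduce has a reduce-scatter and an all-gather phase, each moving
    (n-1)/n of the data per GPU, i.e. 2*(n-1)/n of the gradient size in total.
    """
    grad_bytes = num_params * bytes_per_param
    return 2 * (num_gpus - 1) / num_gpus * grad_bytes


def transfer_time_s(num_bytes, link_gb_per_s):
    """Idealized transfer time at a given link bandwidth (GB/s, 1 GB = 1e9 bytes)."""
    return num_bytes / (link_gb_per_s * 1e9)


BERT_LARGE_PARAMS = 335_000_000
for n in (2, 4):
    sent = ring_allreduce_bytes_per_gpu(BERT_LARGE_PARAMS, n)
    # 25 GB/s is a hypothetical effective link bandwidth, for illustration only
    print(f"{n} GPUs: {sent / 1e9:.2f} GB per GPU per step, "
          f"~{transfer_time_s(sent, 25) * 1000:.0f} ms at 25 GB/s")
```

The estimate shows why the speed of the GPU-to-GPU path matters: the gradient volume per step is fixed by the model size, so a slower transfer path directly lengthens every step.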

With this standard approach to multi-GPU scaling, all GPUs must run at the same speed; otherwise the slowest GPU becomes the bottleneck that all other GPUs have to wait for. Mixing different GPU types is therefore not useful!
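The effect of the slowest GPU can be shown with a simple model of a synchronous data-parallel step: all GPUs start together, and the step only finishes when the slowest one is done. The step times below are hypothetical round numbers, the helper names are hypothetical, and the model ignores communication time.

```python
def step_time(per_gpu_seconds):
    """Synchronous data parallelism: every GPU waits for the slowest one."""
    return max(per_gpu_seconds)


def throughput(local_batch, per_gpu_seconds):
    """Samples per second with the same local batch size on each GPU."""
    return local_batch * len(per_gpu_seconds) / step_time(per_gpu_seconds)


FAST, SLOW = 0.10, 0.20   # hypothetical seconds per step for one local batch
print(throughput(32, [FAST]))         # one fast GPU
print(throughput(32, [FAST, FAST]))   # two fast GPUs
print(throughput(32, [FAST, SLOW]))   # mixed pair: no better than one fast GPU
```

In this model the mixed pair only matches a single fast GPU, because the fast card sits idle for half of every step.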

The next charts show how well the RTX 6000 Ada, RTX 4090 and RTX 5090 scale in multi-GPU setups when using fp32 and fp16 mixed precision calculations.

The RTX 6000 Ada reaches a good, nearly constant linear scale factor of around 0.94 to 0.95, meaning that each additional RTX 6000 Ada GPU adds around 95% of its theoretical linear performance. A similar scale factor is obtained with fp16 mixed precision training. Note the values in the graph: a 2x RTX 6000 Ada configuration delivers performance similar to a single H100 80GB accelerator.
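For reference, a scale factor like the ones quoted here can be computed from measured throughputs. The sketch assumes the definition "measured n-GPU throughput divided by n times the single-GPU throughput", so a value of 1.0 means perfectly linear scaling. The helper names are hypothetical, and the throughput numbers in the usage example are made up for illustration, not results from this benchmark.

```python
def scale_factor(single_gpu_throughput, multi_gpu_throughput, num_gpus):
    """Fraction of perfectly linear scaling reached by a multi-GPU setup."""
    return multi_gpu_throughput / (num_gpus * single_gpu_throughput)


def expected_throughput(single_gpu_throughput, num_gpus, factor):
    """Throughput predicted for num_gpus GPUs at a given scale factor."""
    return single_gpu_throughput * num_gpus * factor


# Hypothetical numbers: 1 GPU = 400 img/s, 2 GPUs = 760 img/s
print(scale_factor(400, 760, 2))          # 0.95
print(expected_throughput(400, 4, 0.95))  # 1520.0
```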

The RTX 6000 Ada, like all NVIDIA Pro GPUs, can use Peer-2-Peer PCIe Transfer to move data directly between the GPUs.

For the RTX 5090, NVIDIA has disabled Peer-2-Peer PCIe Transfer, but transfers through PCIe 5.0 still achieve a good scale factor between 0.91 and 0.97.

The RTX 4090 shows a different picture. While its single-GPU performance is solid, its multi-GPU performance falls short. As shown in the chart below, the scale factor of the second RTX 4090 is only 0.62 to 0.75, which is not good enough for a reasonable multi-GPU setup.

The limited transfer speed is probably an intentional market segmentation by NVIDIA, separating the Pro GPUs from the cheaper GeForce 'consumer' series, which is not meant to be used in multi-GPU setups.

Conclusions

Compile Mode is mandatory to utilize the full performance

Especially on high-end accelerator cards, it gives a huge performance boost by a factor of 1.5 to 4!

Mixed Precision can speed up training by more than a factor of 2

Switching training from fp32 precision to mixed precision training is definitely worth a look for performance. Gaining a performance boost through a software change that fits your constraints can be a very efficient way to increase performance.

Multi GPU scaling is more than feasible

Deep learning performance scales well across multiple GPUs, for at least up to 8 GPUs: 2 GPUs can often outperform the next more powerful GPU model in terms of price and performance.

Mixing different GPU types in multi-GPU setups is not useful

The slowest GPU becomes the bottleneck that all GPUs have to wait for!

The best GPU for Deep Learning?

Performance and GPU memory size are certainly the most important aspects of a GPU used for deep learning tasks, but performance relative to power consumption and the form factor also have to be taken into consideration.

So the right choice depends heavily on your requirements. Here are our assessments of the most promising deep learning GPUs:

RTX A5000

The RTX A5000 is still a decent entry-level card for deep learning training, machine learning and inference tasks. It offers very good energy efficiency, with performance similar to the legendary but more power-hungry graphics card flagship of the NVIDIA Ampere generation, the RTX 3090.

RTX 5000 Ada

The RTX 5000 Ada is one of the later additions to the NVIDIA Ada Lovelace series and is positioned as the successor of the RTX A5000. It is a good replacement but offers only a small performance upgrade over the RTX A5000 in fp32 and fp16 workloads. The bottleneck of the RTX 5000 Ada is its low memory bandwidth of only 576 GB/s. On the plus side, it has 32 GB of GDDR6 memory and supports fp8 computation, which can enable larger batch sizes and allow larger models to be loaded in some use cases.

RTX 4090 / 5090

The high-end NVIDIA consumer GPUs of the Ada Lovelace and Blackwell generations. Their single-GPU performance is outstanding, partly due to their high power budget.

The multi-GPU performance, especially of the RTX 4090, falls short of its potential as shown above, but this is not a hindrance in inference use cases where the model fits into the memory of each GPU: each card can then deliver its full performance, as no communication between the GPUs is necessary.

A multi-GPU setup with more than two RTX 4090s does not seem to be an efficient way to scale.

The RTX 5090 currently seems to be less strictly limited than the RTX 4090 in multi-GPU setups!

RTX 6000 Ada / L40S

The Pro versions of the RTX 4090, with double the GPU memory (48 GB) and very solid performance at moderate power requirements. Currently the scalable all-round GPU with the best top-performance/price ratio, also suitable for large language model inference setups.

The RTX 6000 Ada is currently still the fastest available card for quad-GPU workstation setups.

At first glance, the L40S seems to be the server-only, passively cooled version of the RTX 6000 Ada, since the GPU processor specifications appear to be the same. But it has a disadvantage in the details: about 10% lower memory bandwidth (864 vs. 960 GB/s), which affects its achievable performance in these benchmarks.

NVIDIA H100 NVL

The successor of the H100 80GB, with about 30% higher performance and 14 GB of additional memory. This is achieved with faster and denser HBM3 memory and a higher power budget of 400 Watts.

The 400 Watt power budget is still a compromise compared to the 600/700 Watts per GPU of an H200 NVL or a DGX/HGX server. Better rack utilization and lower energy and cooling costs can become factors to consider in 24/7 setups.

An octa (8x) NVIDIA H100 NVL setup, as possible with the AIME A8004, catapults one into the multi-petaFLOPS HPC computing range.

NVIDIA H200 NVL / SXM

If the most performance and the highest performance density are needed regardless of price, the NVIDIA H200 is currently the first choice: it delivers high-end deep learning training and inference performance.

The 141 GB of HBM3e memory is the key advantage of the H200 for scaling to larger models.

The H200 NVL, as a PCIe 5.0 card solution, is available in 1 to 8 GPU configurations within the AIME A8005 server. Its performance is nearly on par with the H200 SXM version, although its power requirement is 100 Watts lower. The H200 NVL can be equipped with NVLINK bridges to realize 4-way NVLINK transfers of 900 GB/s between four of these accelerators.

The H200 SXM is used in HGX servers like the AIME GX8-H200 and is only available as an 8x GPU solution with full NVLINK interconnect between all H200 SXM GPUs.

The AIME GX8-H200 server is the cluster solution to scale GPU computing across multiple servers, where each GPU can be connected through a high-speed 400 GbE LAN switch to exchange data directly with all other GPUs in the cluster.

This article will be updated with new additions and corrections as soon as available.

Questions or remarks? Please contact us at: hello@aime.info