Thanks to the deep learning developer framework AIME-MLC, the installation and operation of such a model like Stable Diffusion is possible without much effort and free of charge. The installation process and the basics of Stable Diffusion are described below.

Stable Diffusion is a Deep Learning Text-to-Image Model. In short, this model generates detailed images based on text descriptions. It is similar to previously released models such as DALL-E 2, Midjourney, or Imagen. However, Start-up Stability.ai published this model 2022 as a freely usable diffusion model for automated image content generation using AI. With the use of our AIME machine learning containers, it takes just a couple of minutes to set it up and run locally on your own server or workstation - provided a correspondingly powerful GPU is available. These ML-Containers take care of the hassle of managing the different driver versions and libraries for setting up your framework. More info about our container framework is found on the AIME MLC blog.

Installation

First, download the source code of the model from the official Github repository to the desired folder:

user@client:/$

> cd /home/username/path/to/desired/folder/

> git clone https://github.com/aime-labs/stable-diffusionOn AIME servers and workstations, the rest is quite simple thanks to the AIME MLC framework: In the terminal, you can create a new Docker container where the PyTorch 2.0.1 framework, the CUDA drivers and everything else needed to run Stable Diffusion are already preinstalled, just by typing:

user@client:/home/username/path/to/desired/folder$

> mlc-create stbl_diff-container Pytorch 2.0.1-aime -w=/home/username/path/to/desired/folder/Afterwards, just open the container:

user@client:/home/username/path/to/desired/folder$

> mlc-open stbl_diff-containerThe rest then takes place within the container. The workspace of the container represents the directory of the host system linked to the container. Change to the root directory of Stable Diffusion:

[stbl_diff-container] user@client:/workspace$

> cd stable-diffusionSince the ML container itself already represents a virtual development environment, we can completely dispense the activation of a virtual environment such as venv or conda env. Therefore, only a couple of lines of code are needed to install the dependencies of Stable Diffusion on an AIME Machine Learning Container:

[stbl_diff-container] user@client:/workspace/stable-diffusion$

> sudo apt-get install libglib2.0-0 libsm6 libxrender1 libfontconfig1 libgl1

> sudo pip install -r requirements.txtLastly, download the Stable Diffusion model. The model is Licenced with the CreativeML Open RAIL++-M License. Unzip the file and put it in the right directory.

[stbl_diff-container] user@client:/workspace/stable-diffusion$

> curl -o sd-v1-5.zip http://download.aime.info/public/models/stable-diffusion-v1-5-emaonly.zip

> sudo apt install unzip

> unzip sd-v1-5.zip

> rm sd-v1-5.zipEverything is set up and Stable Diffusion is ready for use! The model weights 'v1-5-pruned-emaonly.ckpt' and the licence are in the folder '/stable-diffusion-v1-5-emaonly/'. In the examples below this checkpoint is choosen by default. To use another checkpoint, add the flag –ckpt followed by the destination of the checkpoint file.

Note that the configuration should only be executed when a new container is created. Otherwise, just open the container with mlc-open stbl_diff-container (if not already running). Then navigate to the root directory with '> cd stable-diffusion' to use the model.

Image Generation

There are 2 methods of creating images:

- Single image generation using direct prompt input

- Bulk image generation using a list of prompts

Let's check them out one by one:

1. Single Image Generation with Direct Prompt

To generate an image, run the following command in the terminal in the stable-diffusion directory:

[stbl_diff-container] user@client:/workspace/stable-diffusion$

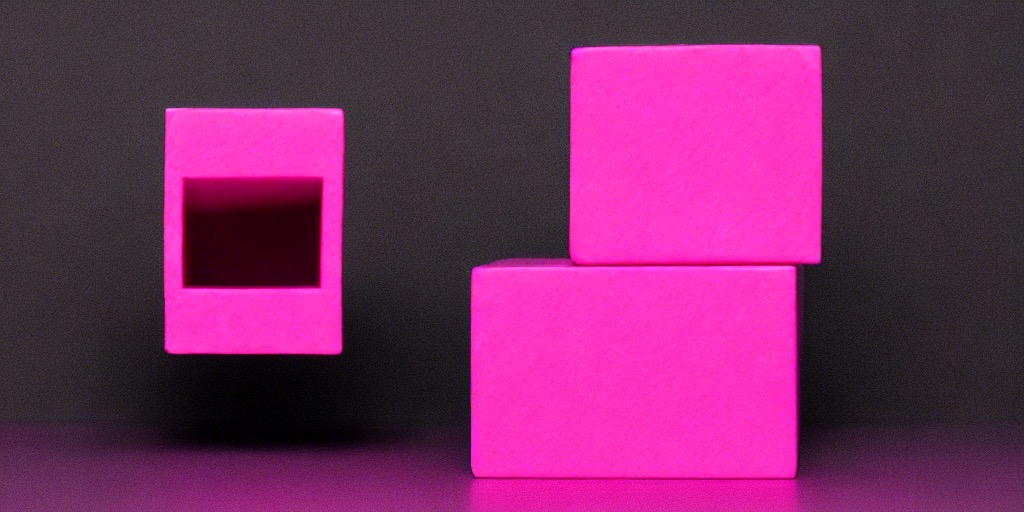

> python scripts/txt2img.py --prompt "a photo of a pink cube, black background" --plms --skip_grid --n_samples 1The generated image is saved by default in the directory /home/username/path/to/desired/folder/stable-diffusion/outputs/txt2img-samples/

![[Image of a simple pink cube generated by Stable Diffusion]](https://www.aime.info/static/img/stable_diffusion/00021.jpg)

To further influence the process of image generation, Stable Diffusion offers more input parameters that are described in detail below. Here is another example where the dimensions (W, H) and sampler are changed:

[stbl_diff-container] user@client:/workspace/stable-diffusion$

> python scripts/txt2img.py \

--prompt "a photo of a pink cube, black background" \

--W 768 --H 512 \

--plmswhich generates the following image:

2. Bulk Image Generation with List of Prompts

If you want to generate a large number of images at the same time, using batch generation saves a lot of time. This is because the model remains loaded in the GPU memory. Otherwise, the complete model has to be reloaded into the GPU memory again with each individual script call.

Before running the script, create an appropriate text file prompts.txt in the root directory. This file should contain one prompt per line. Note that the prompts don't require quotation marks / inverted commas. The additional parameters are explained below.

[stbl_diff-container] user@client:/workspace/stable-diffusion$

> python scripts/txt2img.py \

--from-file prompts.txt \

--outdir outputs/bulk-images \

--skip_grid \

--ddim_steps 100 \

--n_iter 3 \

--W 512 \

--H 512 \

--n_samples 3 \

--scale 8.0 \

--seed 119 Benchmarking

A small benchmark comparison is given to test the GPU performance for generating images. This will generate one image using the PLMS sampler in 50 steps. The first two iterations are warm-up steps. The following table lists the processing times for different GPUs generating an image with the following prompt:

[stbl_diff-container] user@client:/workspace/stable-diffusion$

> time python scripts/txt2img.py \

--prompt 'a photograph of an astronaut riding a horse' \

--scale 7.5 \

--ddim_steps 50 \

--n_iter 3 \

--n_samples 1 \

--plmsComparing different GPUs like RTX 3090, RTX 3090Ti, A6000 and A100 produces the following results:

In the following we list the above results together with the loading time of the model as a table for comparison:

| GPU TYPE | TIME TO LOAD MODEL | PROCESSING TIME | TOTAL TIME |

|---|---|---|---|

| RTX 3090 | 12,95 s | 5,64 s | 18,59 s |

| RTX 3090Ti | 12,24 s | 4,95 s | 17,19 s |

| A5000 | 13,06 s | 6,07 s | 19,13 s |

| A6000 | 13,35 s | 5,83 s | 19,18 s |

| A100 | 12,35 s | 4,35 s | 16,7 s |

By default Pytorch uses float32 (full precision) to represent model parameters. On GPUs with less memory it can run out of memory (OOM). A possible solution to this problem is to automatically cast your tensors to a smaller memory footprint like float16. It requires about 10 GB VRAM with full precision and 9 GB with autocast precision. The latter optimizes memory utilization and is faster, but performs at a slightly lower precision.

Parameters

Stable Diffusion comes with plenty of different parameters you can play around with. The quality of the output images depends heavily on the textual description and the parameters. The default parameters generate good images for most of the prompts. A good start is to play around with the prompt and different seeds. First, there is a quick overview of the parameters used in the script. Next, we take a deeper dive into the parameters and explain their functionality, illustrated by examples.

The script can be adapted to your own ideas with several command-line arguments. This can also be done by typing >python scripts/txt2img.py --help.

| ARGUMENT | DESCRIPTION |

|---|---|

| --prompt | "English phrase in quotes" describes the generated image. Default is "a painting of a virus monster playing the guitar". |

| --H | Integer specifies the height of the image (in pixels). Default is 512. |

| --W | Integer specifies the width of the image (in pixels). Default is 512. |

| --n_samples | Integer specifies the batch size, i.e. how many samples are to be generated for each given prompt. Default is 3. |

| --from-file | "file path" specifies the path file containing the prompt texts for bulk image generation. One prompt per line. |

| --ckpt | "file path" specifies the path to the model checkpoint. Default is "models/ldm/stable-diffusion-v1/model.ckpt". |

| --outdir | "file path" specifies output directory where generated images are saved. Default is "outputs/txt2img-samples". |

| --skip_grid | Flag to omit the automatic generation of the overview image. This image is generated by default and shows all generated mages in a grid. |

| --ddim_steps | Integer specifies the number of sampling steps in the diffusion process. Increasing the steps improves the result, but also increases the calculation time. The model converges after a certain amount of steps, so more steps won't have any effect. Default is 50. |

| --plms | flag to use PLMS sampling |

| --dpm_solver | flag to use DPM sampling, much faster than DDIM or PLMS and converges after fewer steps. |

| --n_iter | Integer specifies how often the sampling loop should be executed. Effectively the same as n_samples, but use this instead if an OMM error occurs. Default is 2. |

| --scale | Float specifies the Classifier Free Guidance (CFG). This is sort of the "Creativity vs. Prompt Scale". The lower, the more AI creativity. The higher, the more to stick to the prompt. Default is 7.5. |

| --seed | Integer specifies a random start value to be set. Can be used for reproducible results. Default is 42. |

| precision | Evaluate at full or autocast precision depending on your available GPU memory. Default is autocast precision. |

Prompt Format

The content of the generated image depends on how well you describe your idea. Some already call it prompt engineering because it holds so many parameters. There are a few factors to consider. First, the priority of the words, the first-ranked words are more important. Second, are the modifiers used in the prompt. Do you want a painting or a photograph? What kind of photograph? Art Mediums, Artists, Illustrations, Emotions, and Aesthetics. Lastly, there are the magic words that really bring your image generation game to the next level:

- Improve quality, contrast and detail:

"HDR, UHD, 8k, 64k, highly detailed, professional, trending on artstation, unreal engine, octane render" - Add texture and improve lighing:

"studio lightning, cinematic lightning, golden hour, dawn" - Add life:

"vivid colors, colorful, action cam, sunbeams, godrays" - Blur background and highlight the subject:

"bokeh, DOF, depth of field" - For historic alike photos:

"high-resolution scan, historic, 1923" - ...

For a deeper dive into prompt engineering, this prompt book from Open Art gives a fantastic overview with a ton of examples. The following examples show the difference in lighting, generated by the prompt.

A Affenpinscher dog sitting in the middle of a street of a TV tower Berlin

with sampler DPM++ at 20 DDIM steps, CFG 11.0 and seed 927952353

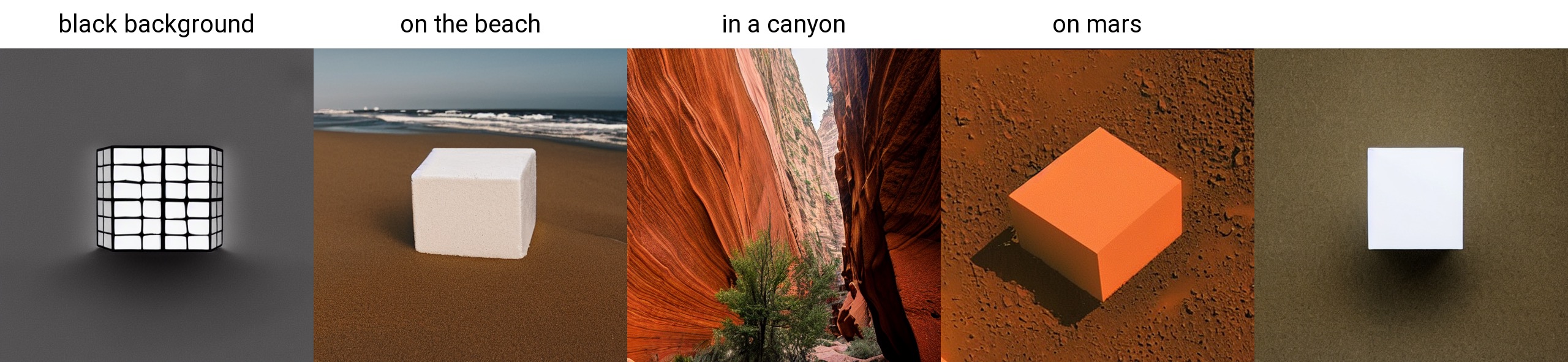

Even the simple example of the cube shows how much the image content changes with simple adjustments to the prompt text:

Resolution

The quality of the output images depends heavily on the description and the displayed image context in relation to the image dimensions. In other words, if one wants to create a portrait (image ratio < 1), but specifies the image dimensions as a landscape (image ratio > 1), the generated image content may not deliver the expected result. Larger images have a higher quality and correspond more to the prompt description. But often the interpreted context is then repeated - so in the cube example with dimensions of 1024x512 pixels (landscape), several cubes are created at once. Note that the dimensions must be adjusted by multiples of 8. Thus, values such as 512, 576, 640, 704, 768, 832, 896, 960, 1024, etc. are possible.

CFG

The Classifier Free Guidance (CFG) or scale is the balance between the 'creativity' of the AI itself and the prompt. Here is a recommended use for CFG:

- CFG 0-2: AI will completely ignore the prompt.

- CFG 2-6: Let AI do the creative work.

- CFG 7-10: Recommended for most prompts, prompt and AI are working together.

- CFG 10-15: Follows the prompt more. only recommend when prompt is specific enough.

- CFG 16-20: Only follows the prompt, must be very detailed. Might affect coherence and quality.

The following series of images shows how the image content changes with the value for CFG scale

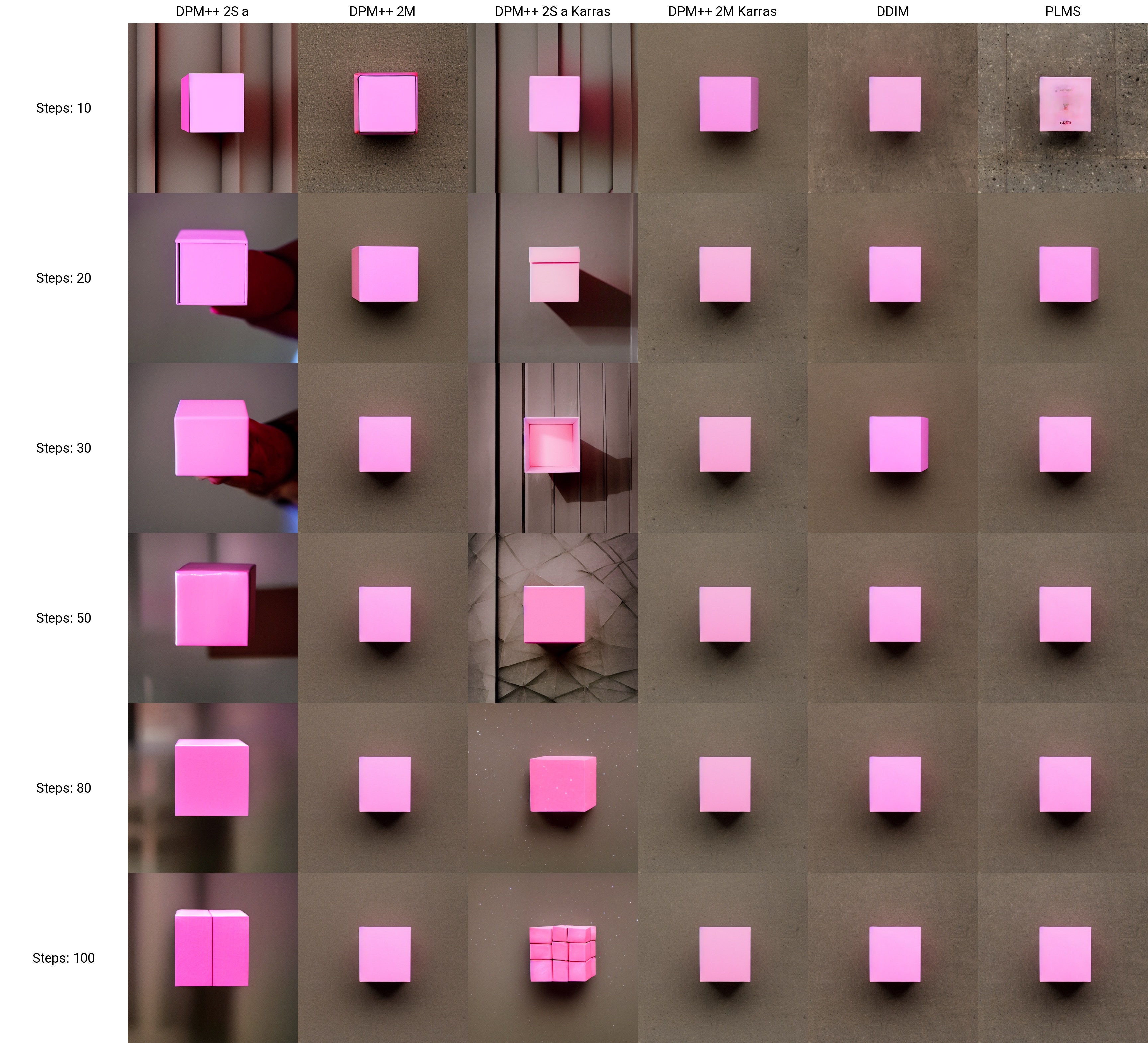

Sampler

A sampler reconstructs the image in N sampler steps. Start off with a fast sampler at low steps to get a first view of what your prompt should look like with different seeds. Then you can experiment with the step value and different samplers to improve your image (while keeping the selected seed constant). As seen in the example, the samplers are quite alike, but each sampler has its own touches. It is more about speed and the minimum step value to get a quality image.

What is a Sampler?

Stable diffusion is a latent diffusion model. In short, this model will create an image from text out of noise. In the training phase, a diffusion model gradually adds noise to an image and then tries to remove it. So actually it is trained to remove noise from an image while conditioned on text. At each step, an image of 1024x1024 pixels is generated with less noise than before.

Generating such a big image requires a lot of computation. There the latent part comes into play, it converts the input image to a lower dimension and converts the output denoised image back to a higher resolution after the diffusion process. Because of this, the process doesn't require a ton of GPU power.

During inference, the model reconstructs an image from noise and your text input in N sampler steps. At each step, a scheduler or sampler computes a predicted denoised image representation in the latent space.

The table below shows a list of samplers with a description of their differences.

| SAMPLER | DESCRIPTION |

|---|---|

| DDIM | Denoising Diffusion Implicit Models. This sampler creates acceptable results in as little as 10 steps. It works perfectly for generating new prompt ideas at a fast rate. |

| PLMS | This sampler gives fast sampling while retaining good quality. More info in the original paper Pseudo Numerical Methods for Diffusion Models on Manifolds |

| DPM | Denoising Diffusion Probabilistic Models. The DPM-solver is a fast dedicated high-order solver. It is suitable for both discrete and continuous-time diffusion models without any further training. This can reproduce high-quality in only 10 to 20 steps. More info is found in the paper. This sampler shows the same results as a DDIM sampler but converges after fewer steps. This is the most recent sampler that benefits both speed and quality. |

| LMS | Linear Multistep Scheduler for k-diffusion. Gives good generations at 50 steps, if the output looks a bit cursed, you can go up to 80 or something. 8-80. |

| Euler | This is just as the DDIM sampler, this is a fast sampler with great results at low steps. This is a wild sampler because it changes generation style very rapidly as seen in the example. |

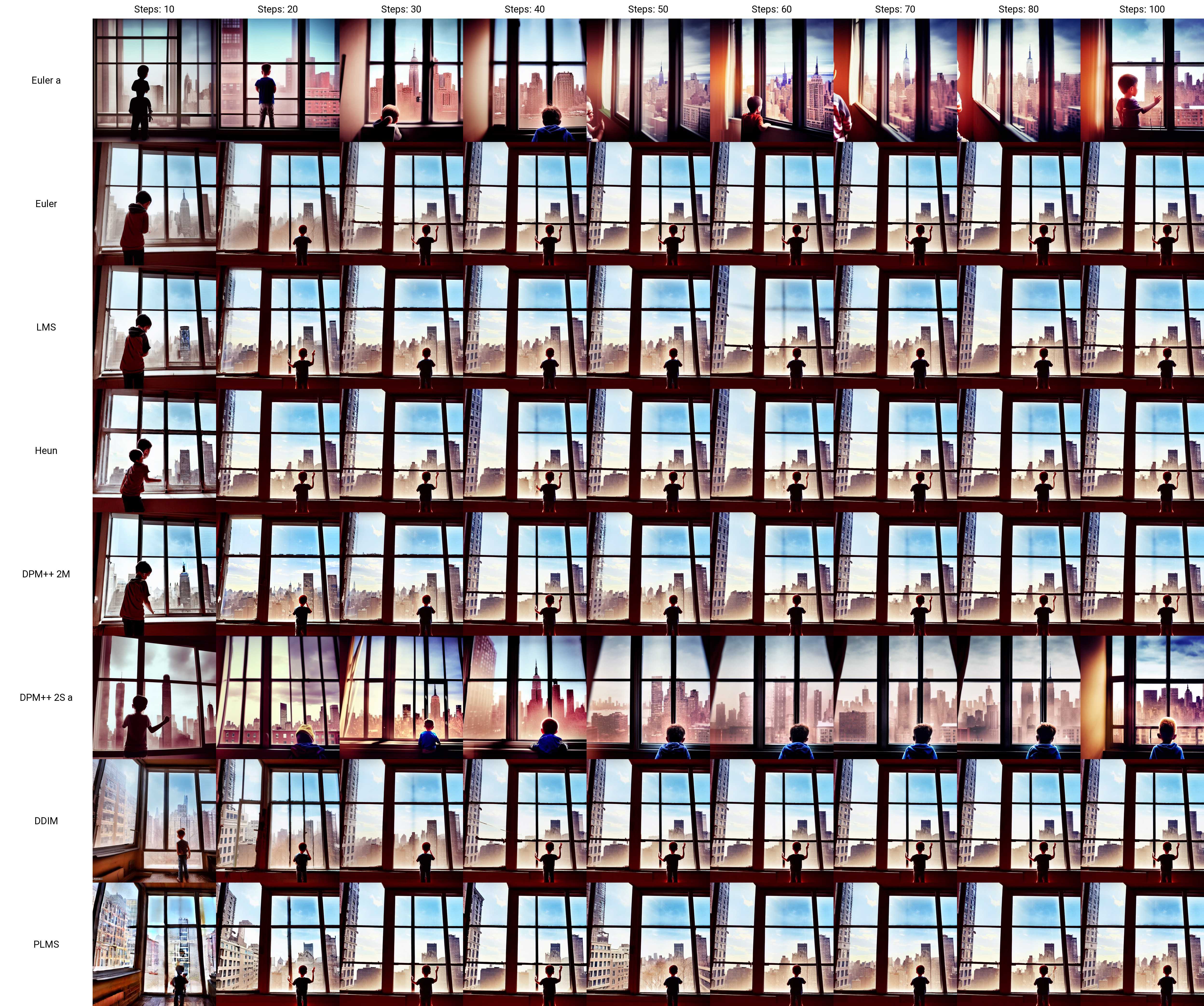

The image grids below demonstrates the differences of the generated images for the cube example depending on the selected DDIM step size and the sampler being used.

Another grid shows a steps over grid overview for the prompt

Young boy looking out of the window of an apartment in the middle of New York, post-apocalyptic style, peaceful emotions, vivid colors, highly detailed, UHD

With the following tool you can explore different samplers and DDIM step sizes interactively:

Related Links

In the following we list further links for a deeper understanding of stable diffusion and target-creative prompt generation

Background Knowledge

- Original Stable Diffusion repo

- Explanation of Stable Diffusion

- Prompt Book with a visual explanation of different prompts and parameters

Explore the LAISON database

Explore prompts

Videos

Conclusion

Image-generating AI models are evolving super fast, with new applications and lighter and faster models coming up every week. We're excited to see what's next on AIME workstations and servers. Stable Diffusion provided an open source model and we explained how to set it up on your local machine with an AIME MLC. You can just use the model with the default parameters, but it's fun to play around with the prompts and parameters to create nice images. Don't get lost in parameter adjustment to create the perfect image you have in mind. Start with a fast sampler and low steps, and experiment with the command prompt and different seeds to get an idea. Then change other parameters like CFG, different samplers and higher step values.